The Drift exploit and Stabble’s precautionary warning point to a difficult crypto security problem: the next major breach may begin long before funds move on-chain.

That is what makes these incidents more than isolated alarms. They suggest that some protocols may still be looking for smart contract flaws, while the real exposure lies in hiring, access, governance, and trusted relationships.

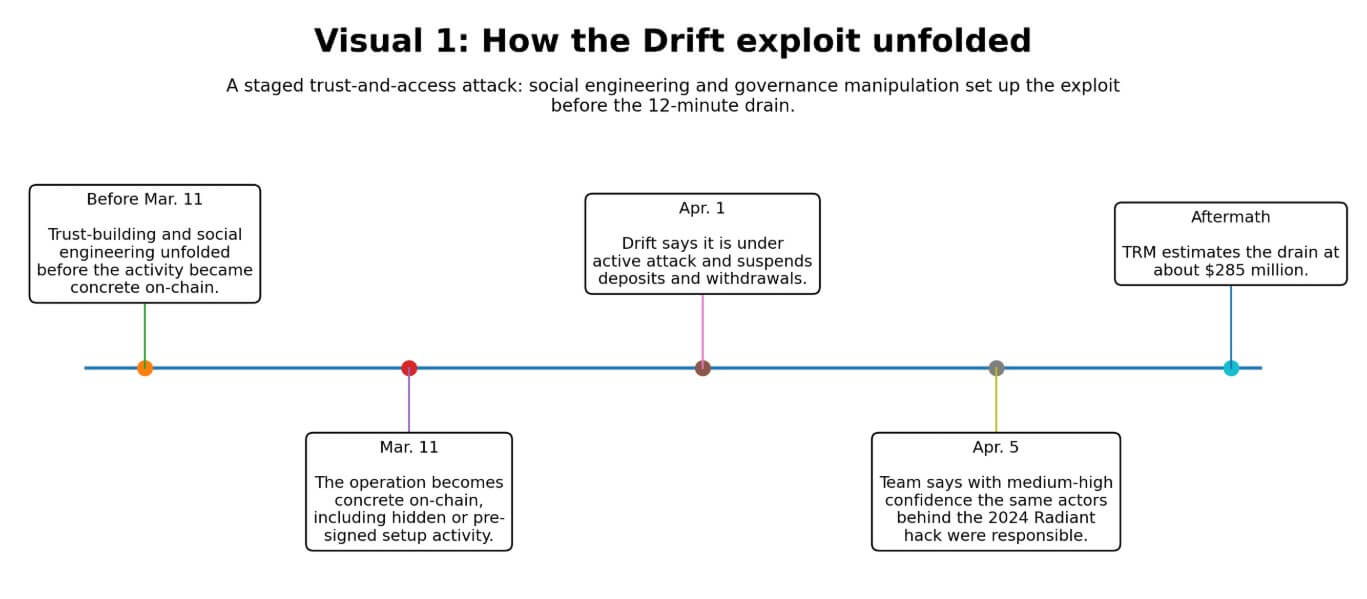

On Apr. 1, Drift suspended deposits and withdrawals and told users it was under an active attack.

By Apr. 5, the team said with medium-high confidence that the same threat actors behind the October 2024 Radiant Capital hack had executed the operation.

TRM Labs estimated the drain at approximately $285 million, and the Drift post-mortem described a complex scheme in which individuals used $1 million of their own capital and met in person with Drift team members to infiltrate the protocol’s structure.

On the technical side, TRM identified the critical weakness as social engineering of multisig signers combined with a zero-timelock Security Council migration. This governance design enabled attackers to execute privileged actions without the delays intended to catch unauthorized changes.
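To make the timelock point concrete, here is a minimal Python sketch of a delay-gated governance queue. It is illustrative only: the `TimelockedGovernance` class, its method names, the `migrate_council` action, and the 48-hour delay are hypothetical and are not taken from Drift's contracts or TRM's analysis. The sketch shows only the general mechanism, in which a privileged action must sit in a queue for a fixed period before it can run, giving reviewers a window to cancel it.

```python
from dataclasses import dataclass, field


@dataclass
class TimelockedGovernance:
    """Toy model of a governance queue with a mandatory delay (hypothetical)."""
    delay_seconds: int
    _queue: dict = field(default_factory=dict)  # action id -> earliest execution time

    def queue(self, action_id: str, now: int) -> int:
        """Register a privileged action; returns the earliest time it may run."""
        eta = now + self.delay_seconds
        self._queue[action_id] = eta
        return eta

    def cancel(self, action_id: str) -> None:
        """Reviewers can cancel a queued action during the delay window."""
        self._queue.pop(action_id, None)

    def execute(self, action_id: str, now: int) -> bool:
        """Run the action only if it was queued and its delay has elapsed."""
        eta = self._queue.get(action_id)
        if eta is None or now < eta:
            return False
        del self._queue[action_id]
        return True


# With an assumed 48-hour delay, an attempt 12 minutes after queuing is refused.
guarded = TimelockedGovernance(delay_seconds=48 * 3600)
guarded.queue("migrate_council", now=0)
blocked = guarded.execute("migrate_council", now=12 * 60)

# With a zero delay, the same action is executable the moment it is queued.
unguarded = TimelockedGovernance(delay_seconds=0)
unguarded.queue("migrate_council", now=0)
immediate = unguarded.execute("migrate_council", now=0)
```

In this toy model the zero-delay configuration offers no review window at all, which is the property the TRM analysis highlights.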

Elliptic said the laundering patterns and network indicators matched those of prior DPRK-attributed operations and pointed to a probable compromise of administrator keys that enabled privileged withdrawals and administrative control.

Attackers earned enough trust to convert ordinary access into a 12-minute, $285 million drain.

On Apr. 7, the Solana-based liquidity protocol Stabble told its liquidity providers to withdraw funds as a precaution.

The new team that recently acquired the protocol said it had discovered that a former CTO appeared to be the same person ZachXBT had publicly flagged as a North Korean IT worker.

The protocol promised new audits before resuming operations. What Stabble demonstrated was that alleged insider exposure now moves users fast enough to constitute a live funds event on its own.

The operating manual already exists

Treasury’s Mar. 12 sanctions release put numbers on the problem: DPRK IT-worker fraud schemes generated nearly $800 million in 2024, using fraudulent documents, stolen identities, and fabricated personas.

The Department of Justice separately said North Korean operatives obtained employment at more than 100 US companies using fake and stolen identities. In one Atlanta blockchain R&D case, workers stole more than $900,000 in virtual currency.

These were workforce infiltrations sustained across multiple firms over extended periods.

Flare and IBM X-Force published their operational breakdown on Mar. 18. The research describes a tiered structure of recruiters, facilitators, IT workers, and collaborators who assist with identity verification and onboarding.

Once embedded, operatives use remote access tools, VPN and proxy services, and internal communication channels, leaving detectable but often-missed traces in device logs.

Flare and IBM frame this as a shared problem owned jointly by security teams and HR, requiring coordination across hiring, onboarding, access controls, and offboarding disciplines.

| Stage | Who is involved | What happens | What the warning sign looks like | Why crypto teams miss it |
|---|---|---|---|---|
| Recruitment / identity fabrication | Recruiters, facilitators, fake applicants, collaborators | Operatives build false personas using fraudulent documents, stolen identities, and fabricated employment histories to get through screening | Inconsistent biographical details, thin digital footprint, identity mismatches, suspicious references | Teams optimize for speed and technical talent, not adversarial hiring review |
| Hiring / onboarding | HR, hiring managers, collaborators / brokers, IT workers | Collaborators help candidates pass identity verification, background checks, and onboarding steps | Unusual help during onboarding, documentation anomalies, device / location inconsistencies | Hiring and security often operate separately, so no single team sees the whole pattern |
| Embedding inside teams | IT workers, managers, coworkers, contractors | Once hired, operatives establish legitimacy over time through routine work and trusted relationships | Heavy use of VPNs / proxies, unusual remote-access patterns, odd device logs, limited willingness for direct interaction | Normal remote-work behavior can mask the indicators, and smaller teams lack monitoring depth |
| Access accumulation | Developers, admins, signers, governance operators | Trusted insiders gain permissions, signer influence, admin access, or visibility into sensitive workflows | Permission creep, over-broad role access, weak separation of duties, dormant approvals sitting in place | Crypto security is often code-centric, so human access design gets less scrutiny than smart contracts |
| Exploitation / theft or extortion | Compromised insiders, external handlers, laundering networks | Attackers convert ordinary access into privileged withdrawals, governance actions, key compromise, or post-access theft | Sudden use of privileged functions, suspicious governance migrations, unusual withdrawal behavior, emergency pauses | By the time on-chain activity looks abnormal, the trust failure happened much earlier |
| Post-incident response | Protocol teams, users, auditors, investigators | Teams pause operations, ask users to withdraw, rotate access, commission audits, and investigate exposure | Precautionary withdrawal warnings, audit resets, access reviews, attribution updates | Most protocols do not have mature playbooks for insider-risk containment and offboarding |

Reuters reported on Mar. 31 that a North Korea-linked operation compromised the widely used Axios npm package in a supply chain attack that could have affected millions of environments.

The actor behind that compromise, UNC1069, is distinct from UNC4736, the cluster Drift tied to the Radiant hack. Yet both cases exploit a trusted relationship, whether with a trusted person, a trusted signer, or a trusted package, before touching funds or systems.

What to expect

The bear case runs through what Drift’s staging timeline exposes about latent exposure across DeFi.

If attackers spent Mar. 11 to Apr. 1 embedding pre-signed authorizations and engineering approvals before executing the drain, and that staging came on top of months of complex social engineering, then other protocols may already host compromised signers, contractors, or contributors they have yet to identify.

Stabble’s situation, where a suspected link to a flagged identity surfaced in ZachXBT’s public research before the team’s own controls caught it, illustrates how often organizations learn about their own exposure from the outside.

Treasury’s $800 million figure for a single year puts a floor on what the threat has already cost. DOJ’s 100-plus-company figure suggests the target distribution is broad.

In that environment, the next major loss may already be inside the perimeter, waiting on a governance window or an admin key rotation.

The bull case is grounded in the sector’s capacity to adapt once the threat model becomes concrete. Drift is the concrete proof, and the countermeasures are well documented.

Protocols can add timelocks to governance migrations, reduce signer powers, segment permissions across functions, and treat onboarding as a security checkpoint with the rigor applied to code audits.

Flare and IBM supply the operational framework: verify identity aggressively, monitor device logs and remote-access indicators, segment contractor access, and build offboarding discipline that revokes credentials and signing authority on exit. The zero-timelock governance design identified by TRM as central to Drift’s exploit is fixable.

Protocols that fix it and add organizational controls alongside it materially narrow the attack surface.
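As a rough illustration of what an organizational access review could look like, the Python sketch below checks a list of access grants for two of the problems named above: grants still held by people who have left, and a single person holding roles that should be split. Everything here is hypothetical, including the `Grant` type, the role names, and the example holders; it is a sketch of the idea, not a framework published by Flare, IBM, or any protocol.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    holder: str
    role: str  # hypothetical role names, e.g. "multisig_signer", "deploy_admin"


def stale_grants(grants, active_holders):
    """Grants still held by people who are no longer active contributors."""
    return [g for g in grants if g.holder not in active_holders]


def duty_conflicts(grants, conflicting_roles):
    """Holders who have every role in a set that should be split across people."""
    roles_by_holder = {}
    for g in grants:
        roles_by_holder.setdefault(g.holder, set()).add(g.role)
    return sorted(
        holder
        for holder, roles in roles_by_holder.items()
        if conflicting_roles <= roles
    )


# Example inventory (invented for illustration).
grants = [
    Grant("alice", "multisig_signer"),
    Grant("bob", "multisig_signer"),
    Grant("bob", "deploy_admin"),
    Grant("carol", "repo_write"),
]
active = {"alice", "bob"}  # carol has left but still holds a grant

to_revoke = stale_grants(grants, active)
conflicts = duty_conflicts(grants, {"multisig_signer", "deploy_admin"})
```

In this example, `to_revoke` contains carol's leftover `repo_write` grant, and `conflicts` flags bob for holding both signing and deployment authority. A real review would draw the inventory from the systems that actually issue keys and permissions rather than a hand-written list.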

If Drift becomes a forcing event, as the 2016 DAO hack did when it forced a reckoning with smart contract risk, the sector could close the gap between known DPRK tactics and actual defenses within a reasonable window.

The harder constraint on the bull case is institutional habit. Crypto teams built their security culture around audits, bounty programs, and formal verification.

Adding identity verification, access minimization, device controls, signer separation, and HR security coordination demands a different operating posture, one that most small-to-medium protocols have yet to build.

The market will price this in, with protocols that demonstrate governance hygiene and operational controls attracting a trust premium.

| Scenario | What drives it | What happens inside protocols | Market consequence | What stronger teams do differently |
|---|---|---|---|---|
| Bear case: latent exposure is already inside the perimeter | Drift’s long staging timeline suggests other protocols may already host compromised signers, contractors, or contributors | Teams discover exposure late, often after external research, suspicious activity, or a live incident | More precautionary pauses, user withdrawals, TVL fragmentation, and a trust discount on smaller protocols | Tighten signer controls, add timelocks, rotate credentials faster, segment permissions, and audit org access as aggressively as code |
| Bull case: Drift becomes a forcing event | The sector treats Drift as a structural wake-up call, not an isolated hack | Protocols upgrade governance design, identity verification, onboarding checks, device monitoring, and offboarding discipline | Confidence gradually stabilizes, with better-defended protocols recovering trust faster | Add timelocks to governance changes, minimize access, verify identities aggressively, and integrate HR with security operations |
| Trust-premium case: market rewards operational security | Users and capital begin distinguishing between audited code and audited organizations | Protocols that can prove governance hygiene and access discipline attract stickier users and counterparties | A premium emerges for teams with visible controls; weaker teams face higher skepticism and slower liquidity return | Publish clearer security processes, separate signer roles, document offboarding, monitor remote-access indicators, and show repeatable operational hygiene |
| Stagnation case: the threat is known but habits do not change fast enough | Small and mid-sized teams keep relying mainly on audits, bounties, and formal verification | Code security improves, but hiring, access, and trusted-software gaps remain open | Repeated “surprise” incidents keep resetting confidence and raising the cost of trust | Treat non-code controls as part of core protocol security, not as an optional compliance layer |

The gap above the code layer

Treasury, DOJ, Flare, IBM, TRM, and Elliptic are each, in different ways, pointing to the same structural gap: smart contract audits address only the code layer.

Who holds signing keys, who vouches for contractors, who reviews device logs, and who has the authority to push a governance migration without a timelock are questions that live above that layer. The current generation of security tooling barely reaches them.

The next exploit may begin with a hiring decision, contractor onboarding, a trusted npm package, or a signer who, over months, earned enough confidence to authorize the one transaction that mattered.

Protocols that close that gap before the next attribution update lands will still have their users’ trust when it does.

The post After the $285M Drift hack, new Solana scare shows crypto’s next security risk may already be inside appeared first on CryptoSlate.